Most AI strategies fail because they start with technology.

I’ve spent 13 years watching enterprise tech adoption from the inside. The pattern is always the same: a new capability arrives, leadership gets excited, a strategy gets written, pilots get funded. Then reality hits.

The technology works fine. The process it was supposed to improve? Nobody actually understood it well enough to automate.

The Pattern Nobody Talks About

Every technology wave — cloud, microservices, now AI — follows the same four-year curve:

Year 1: “This changes everything.” Executive excitement peaks. Budget gets unlocked. Consultants arrive.

Year 2: “Why isn’t this working?” Pilots stall. The gap between demo and production turns out to be enormous. People start quietly questioning the investment.

Year 3: “Maybe we were doing it wrong.” The hype people leave. The operators who actually understand the work start rebuilding — smaller scope, real problems, less fanfare.

Year 4: Real value shows up — in places nobody predicted during Year 1.

AI is somewhere between Year 1 and Year 2 for most enterprises right now. The companies that will win aren’t the ones with the biggest AI budgets. They’re the ones who survive Year 2 without pulling the plug.

Start with the Workflow, Not the Model

The companies getting real ROI from AI right now have one thing in common: they started with a painful, well-understood process, not with “where can we apply AI?”

Here’s the difference:

Bad starting point: “We need an AI strategy. Let’s identify use cases.” This produces a brainstorm list of 50 possibilities, three pilots, and zero production deployments.

Good starting point: “This approval workflow takes 6 people, 4 days, and breaks every time someone’s on vacation. Can we fix it?” This produces a focused problem with measurable outcomes.

The first approach sounds strategic. The second approach actually ships.

Why This Keeps Happening

Enterprise leaders aren’t stupid. They’re pattern-matching from previous technology waves — and the pattern they’ve learned is: “Get ahead of it or get left behind.”

That’s not wrong. But “getting ahead of it” doesn’t mean buying tools and launching pilots. It means understanding your operations deeply enough to know where automation will actually stick.

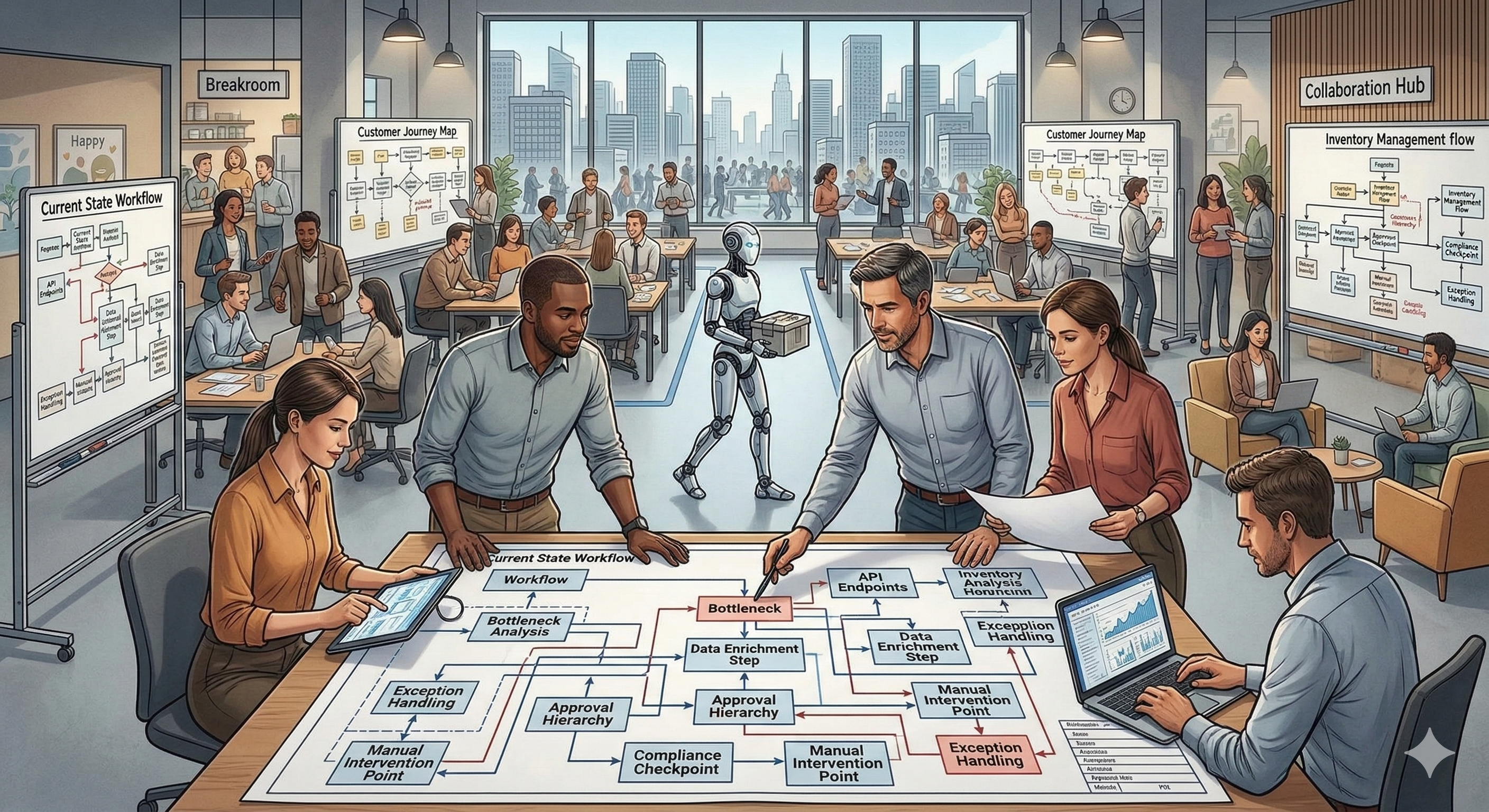

If you can’t explain a workflow on a whiteboard — who does what, when, and why — you can’t automate it. Not with AI, not with anything.
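To make the whiteboard test concrete, here is a minimal Python sketch of what a mapped workflow could look like as data. Everything in it is hypothetical and for illustration only: the `Step` fields, the step names, and the people. The idea is simply that once "who does what, when, and why" is written down, the fragile spots (steps only one person can do) become easy to list.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Step:
    """One box on the whiteboard (hypothetical structure)."""
    what: str        # the action performed
    who: List[str]   # people who can actually perform it today
    when: str        # trigger for the step
    why: str         # reason the step exists


def single_owner_steps(steps: List[Step]) -> List[str]:
    """Return the steps that only one person can perform."""
    return [s.what for s in steps if len(s.who) == 1]


# Invented example workflow; names and steps are assumptions.
workflow = [
    Step("Validate budget code", ["Priya"], "on request submission",
         "finance needs a valid cost center"),
    Step("Manager sign-off", ["Sam", "Lee"], "after validation",
         "spend authority"),
    Step("Enter into ERP", ["Jordan"], "after sign-off",
         "system of record update"),
]

fragile = single_owner_steps(workflow)
print(fragile)
```

In this made-up example, the two steps with a single owner are the ones that would stall when that person is on vacation. If you can't fill in the `who`, `when`, or `why` for a step, that gap is worth closing before any automation work starts.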

What Actually Works

After watching this cycle repeat five times, here’s what I’d tell any leadership team:

Map before you model. Document the actual workflow. Not the org chart version — the real one, with the workarounds and the tribal knowledge. This is where AI opportunity lives.

Start with pain, not potential. The best AI use case isn’t the most impressive one. It’s the one where people are already frustrated and the process is understood well enough to automate.

Measure outcomes, not activity. “We trained 500 employees on AI tools” is activity. “We reduced approval cycle time from 4 days to 4 hours” is an outcome.

Plan for Year 2. Budget for disillusionment. The pilot will succeed. The rollout will be harder. Build that into your timeline and your leadership communications.
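For the "measure outcomes" point, a rough sketch of how an outcome metric like approval cycle time could be computed from submitted and approved timestamps. The records below are invented sample data, and the function names are my own. Using the median rather than the mean is an assumption on my part, chosen so that one stuck request doesn't distort the number.

```python
from datetime import datetime
from statistics import median


def cycle_hours(submitted: datetime, approved: datetime) -> float:
    """Elapsed hours between submission and approval."""
    return (approved - submitted).total_seconds() / 3600


def median_cycle_hours(records) -> float:
    """Median cycle time in hours over (submitted, approved) pairs."""
    return median(cycle_hours(s, a) for s, a in records)


# Invented sample data: three approvals before and after the change.
before = [
    (datetime(2024, 1, 1, 9), datetime(2024, 1, 5, 9)),    # 96 h
    (datetime(2024, 1, 8, 9), datetime(2024, 1, 11, 9)),   # 72 h
    (datetime(2024, 1, 15, 9), datetime(2024, 1, 20, 9)),  # 120 h
]
after = [
    (datetime(2024, 3, 4, 9), datetime(2024, 3, 4, 13)),   # 4 h
    (datetime(2024, 3, 5, 9), datetime(2024, 3, 5, 12)),   # 3 h
    (datetime(2024, 3, 6, 9), datetime(2024, 3, 6, 15)),   # 6 h
]

before_h = median_cycle_hours(before)
after_h = median_cycle_hours(after)
reduction = 1 - after_h / before_h

print(f"before: {before_h:.0f} h, after: {after_h:.0f} h, "
      f"reduction: {reduction:.1%}")
```

With this sample data the median drops from 96 hours (4 days) to 4 hours, a reduction of roughly 96%. The number of people trained doesn't appear anywhere in the calculation, which is the point.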

The Operator’s Edge

The people best positioned to drive AI adoption aren’t the data scientists or the consultants. They’re the operators — the people who know where the process breaks, who the workaround depends on, and why that one spreadsheet has been the real system of record for 8 years.

If you’re one of those people, your domain knowledge just became your most valuable asset. AI doesn’t need more strategies. It needs more people who can point it at the right problems.

What’s the one process in your organization that everyone complains about but nobody owns? That’s probably where AI should start.